Let’s produce the data to Kafka topic "json_data_topic". If a key column is not specified, then a null valued key column will be automatically added. Note that In order to write Spark Streaming data to Kafka, value column is required and all other fields are optional.Ĭolumns key and value are binary in Kafka hence, first, these should convert to String before processing. | id|firstname|middlename|lastname|dob|gender|salary| | id|firstname|middlename|lastname| dob|gender|salary| As you input new data(from step 1), results get updated with Batch: 1, Batch: 2 and so on. When you run this program, you should see Batch: 0 with data. After download, import project to your favorite IDE and change Kafka broker IP address to your server IP on SparkStreamingConsumerKafkaJson.scala program. The complete Streaming Kafka Example code can be downloaded from GitHub. Val personDF = lect(from_json(col("value"), schema).as("data")) Now, extract the value which is in JSON String to DataFrame and convert to DataFrame columns using custom schema. Val personStringDF = df.selectExpr("CAST(value AS STRING)")

Since the value is in binary, first we need to convert the binary value to String using selectExpr()

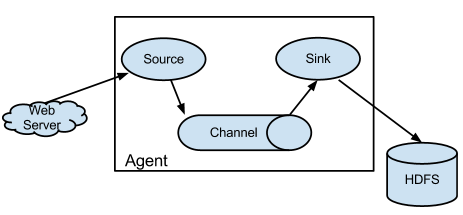

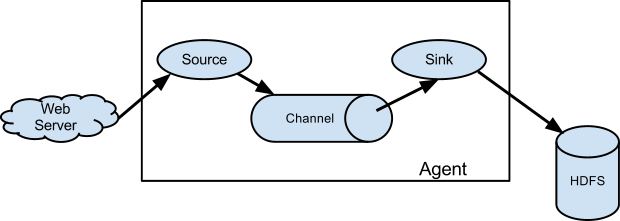

The returned DataFrame contains all the familiar fields of a Kafka record and its associated metadata. Since there are multiple options to stream from, we need to explicitly state from where you are streaming with format("kafka") and should provide the Kafka servers and subscribe to the topic you are streaming from using the option.ĭf.printSchema() returns the schema of streaming data from Kafka. option("startingOffsets", "earliest") // From starting Option startingOffsets earliest is used to read all data available in the Kafka at the start of the query, we may not use this option that often and the default value for startingOffsets is latest which reads only new data that’s not been processed. Spark Streaming uses readStream() on SparkSession to load a streaming Dataset from Kafka. You use the version according to yo your Kafka and Scala versions In order to streaming data from Kafka topic, we need to use below Kafka client Maven dependencies. broker-list localhost:9092 -topic json_topicĢ. Note: By default when you write a message to a topic, Kafka automatically creates a topic however, you can also create a topic manually and specify your partition and replication factor. Just copy one line at a time from person.json file and paste it on the console where Kafka Producer shell is running. First, let’s produce some JSON data to Kafka topic "json_topic", Kafka distribution comes with Kafka Producer shell, run this producer and input the JSON data from person.json.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed